The Bridge That Never Breaks — gRPC Streaming in a Hybrid Offline World

I was sitting in a meeting room with a whiteboard full of arrows pointing everywhere.

A retail chain. 40+ stores. Each store had a POS terminal, a barcode scanner system, and a locally-installed inventory manager — all running offline-first, because the internet in half those stores was about as reliable as a weather forecast.

But the head office? It needed live sales data. Live stock levels. Real-time alerts when a store ran out of something.

The question on the board was brutal in its simplicity:

"How do we make offline systems talk to a cloud platform — reliably, in real time, without losing a single event?"

I had tried REST webhooks. I had tried polling. I had tried a message queue that the offline nodes couldn't reliably reach.

Then I stopped trying to force online patterns onto offline systems — and started building a bridge.

That bridge was gRPC Streaming.

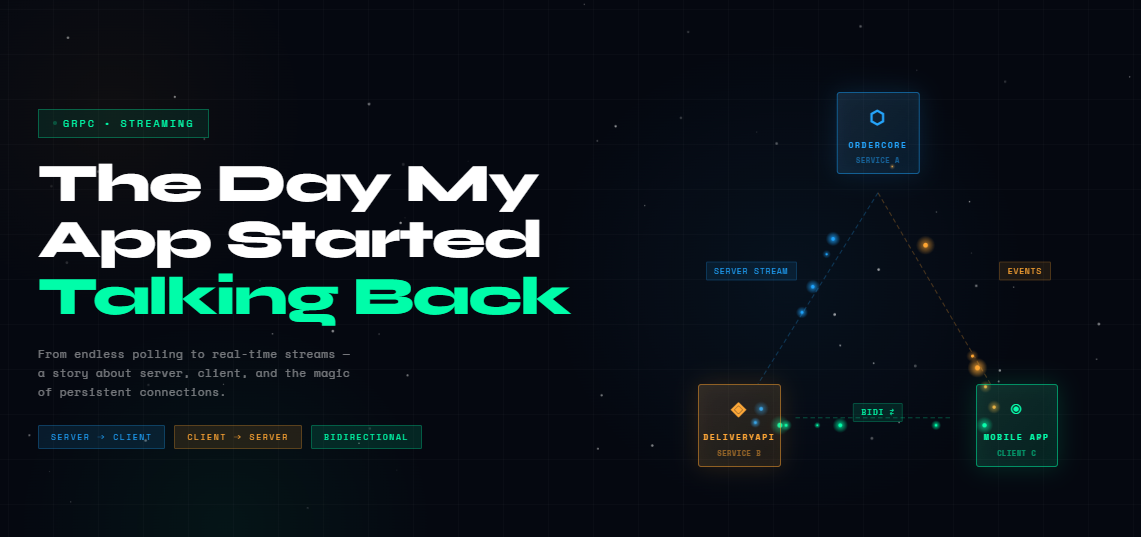

The Cast

Three players in this story:

Service A - SyncEngine: The cloud brain. Receives data, dispatches commands, tracks which offline node sent what.

Service B — BridgeAPI: The on-premise relay. Runs locally in each store. Buffers events when offline, streams them when connected.

Client C — Offline Systems: The POS terminal, the inventory scanner, the legacy ERP. They only talk to BridgeAPI — they don't know the cloud exists.

```text
[ POS / Scanner / ERP ]  →  BridgeAPI (on-premise)  ⇄  SyncEngine (cloud)
       Client C                   Service B                Service A
```

The key insight: Client C never needs to be online. It hands everything to BridgeAPI. BridgeAPI is the one that maintains the gRPC stream to the cloud, and it handles reconnections, retries, and buffering transparently.

The Problem With "Just Use REST"

Have you ever set up a REST webhook from an on-premise system to a cloud endpoint — and then watched it silently fail every time the network hiccuped?

No retry. No confirmation. No way to know if the payload landed.

My first attempt looked like this:

```csharp
// BridgeAPI: the naive approach
public async Task PushSaleEvent(SaleEvent sale)
{
    using var http = new HttpClient();

    // What happens if this times out? Or the cloud is down for 30 seconds?
    // The event is just... gone.
    await http.PostAsJsonAsync("https://cloud.retailhq.com/api/sales", sale);
}
```

A store's internet goes down for 3 minutes during a busy Saturday. Comes back. Nobody knows what was missed. The cloud thinks the store sold nothing. The head office panics.

I needed a system where going offline was expected and handled — not a failure mode.

What gRPC Streaming Changes

Quick grounding: gRPC runs over HTTP/2. That means:

One persistent connection: not a new TCP handshake per message

Multiplexed streams: multiple messages in flight simultaneously

Binary Protocol Buffers: no JSON bloat, faster serialization

Built-in flow control: the sender can't flood the receiver

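And most importantly for offline systems: when the connection drops, the call simply ends with an error, and once the network is back BridgeAPI opens a new stream and continues from where its buffer left off. gRPC doesn't replay anything for you; the local buffer does. It stayed intact, so nothing was lost.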
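Re-establishing the stream is something BridgeAPI has to do for itself. A rough sketch of such a reconnect loop follows, assuming a hypothetical `runStream` delegate that opens the gRPC call and completes (or throws) when that call ends. `ReconnectLoop`, the delay values, and the jitter range are illustrative choices, not taken from the original system.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

public static class ReconnectLoop
{
    // Delay before retry number `attempt` (1-based): doubles each time, capped at maxDelay.
    public static TimeSpan BackoffDelay(int attempt, TimeSpan baseDelay, TimeSpan maxDelay)
    {
        int exponent = Math.Clamp(attempt - 1, 0, 20);   // clamp so the shift cannot overflow
        double ms = baseDelay.TotalMilliseconds * (1L << exponent);
        return TimeSpan.FromMilliseconds(Math.Min(ms, maxDelay.TotalMilliseconds));
    }

    // Keeps a stream alive until cancellation, reopening it after every failure.
    // runStream is a hypothetical delegate wrapping the actual gRPC call.
    public static async Task RunAsync(
        Func<CancellationToken, Task> runStream,
        TimeSpan baseDelay,
        TimeSpan maxDelay,
        CancellationToken ct)
    {
        int failures = 0;
        while (!ct.IsCancellationRequested)
        {
            try
            {
                await runStream(ct);
                failures = 0;   // the stream ended cleanly, so reset the backoff
            }
            catch (Exception ex)
            {
                if (ct.IsCancellationRequested) break;   // shutting down, not a failure
                failures++;
                Console.WriteLine($"Stream failed ({ex.Message}); retry #{failures}");
            }

            // Random 50-100% of the computed delay, so stores that lost
            // connectivity together do not all reconnect at the same instant.
            TimeSpan delay = BackoffDelay(failures, baseDelay, maxDelay)
                             * (0.5 + Random.Shared.NextDouble() / 2);
            try { await Task.Delay(delay, ct); }
            catch (OperationCanceledException) { break; }
        }
    }
}
```

BridgeAPI could pass something like `ct => ListenToCloud(storeId)` as `runStream`. A production version would probably also reset the failure counter once a stream has stayed up for a while, which this sketch does not do.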

Three streaming patterns. Each solves a different piece of the offline puzzle.

Chapter 1 — Server-to-Client Streaming: "Cloud Speaks, Store Listens"

Did you know that many "configuration sync" systems just poll an endpoint every 60 seconds and hope nothing changed in between?

With server-side streaming, SyncEngine (Service A) pushes commands to BridgeAPI (Service B) the instant something changes — price updates, promotion activations, inventory alerts. No polling. No lag.

Scenario: Head office updates a product price. Every online store needs to know immediately.

```protobuf
// sync.proto
syntax = "proto3";

service SyncEngine {
  rpc WatchCommands(StoreHandshake) returns (stream CloudCommand);
}

message StoreHandshake { string store_id = 1; }

message CloudCommand {
  string command_id = 1;
  string type = 2;          // "PriceUpdate", "PromoActivate", "StockAlert"
  string payload_json = 3;
}
```

Service A, SyncEngine (cloud, pushes commands down to stores):

```csharp
// Lives in the service class deriving from SyncEngine.SyncEngineBase (needs `using Grpc.Core;`)
public override async Task WatchCommands(
    StoreHandshake request,
    IServerStreamWriter<CloudCommand> stream,
    ServerCallContext context)
{
    Console.WriteLine($"Store {request.StoreId} connected. Streaming commands...");

    // In production: subscribe to a Redis channel or EF change events
    await stream.WriteAsync(new CloudCommand
    {
        CommandId = "cmd-101",
        Type = "PriceUpdate",
        PayloadJson = """{"sku":"ITEM-42","newPrice":299.00}"""
    });

    await stream.WriteAsync(new CloudCommand
    {
        CommandId = "cmd-102",
        Type = "PromoActivate",
        PayloadJson = """{"promoCode":"SUMMER10","discount":10}"""
    });

    // Stream stays open: more commands flow as they happen
    try
    {
        await Task.Delay(Timeout.Infinite, context.CancellationToken);
    }
    catch (OperationCanceledException)
    {
        // The store disconnected or the call was cancelled; end the stream quietly.
    }
}
```

Service B, BridgeAPI (on-premise, receives commands, applies them locally):

```csharp
public async Task ListenToCloud(string storeId)
{
    var client = new SyncEngine.SyncEngineClient(_channel);
    using var call = client.WatchCommands(new StoreHandshake { StoreId = storeId });

    // ReadAllAsync throws RpcException when the connection drops; the caller reconnects.
    await foreach (var cmd in call.ResponseStream.ReadAllAsync())
    {
        Console.WriteLine($"[{cmd.Type}] received → applying locally");

        // Apply to local POS / inventory system (Client C never talks to cloud directly)
        await _localSystemBridge.ApplyCommand(cmd.Type, cmd.PayloadJson);
    }
}
```

The moment BridgeAPI reconnects after an outage, it calls WatchCommands again and the stream picks up. As long as SyncEngine has been queuing commands for that store during the downtime (the hard-coded sample above leaves that part out), they drain right away.

The store was offline for 20 minutes. The moment it reconnected — 3 queued price updates landed in under a second.
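The WatchCommands sample sends two hard-coded commands, so the "queued during downtime" behaviour has to live somewhere else in SyncEngine. One possible shape is sketched below with `System.Threading.Channels`. `StoreCommandQueues` and its method names are hypothetical, not part of the original system, and the queues here exist in memory only.

```csharp
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Threading;
using System.Threading.Channels;

// Hypothetical helper: one unbounded queue per store, so commands issued while
// a store is offline wait until its next WatchCommands call reads them.
public sealed class StoreCommandQueues<TCommand>
{
    private readonly ConcurrentDictionary<string, Channel<TCommand>> _queues = new();

    private Channel<TCommand> QueueFor(string storeId) =>
        _queues.GetOrAdd(storeId, _ => Channel.CreateUnbounded<TCommand>());

    // Called by whatever produces commands (price change, promo activation, ...).
    // TryWrite succeeds on an unbounded channel that has not been completed.
    public bool Enqueue(string storeId, TCommand command) =>
        QueueFor(storeId).Writer.TryWrite(command);

    // Called from WatchCommands: yields already-queued commands first,
    // then waits for new ones until the token is cancelled.
    public IAsyncEnumerable<TCommand> ReadAllAsync(string storeId, CancellationToken ct) =>
        QueueFor(storeId).Reader.ReadAllAsync(ct);
}
```

Inside WatchCommands, the two hard-coded writes would then become an `await foreach` over `ReadAllAsync(request.StoreId, context.CancellationToken)` that forwards each command to `stream.WriteAsync`. A command leaves the queue as soon as it is read, so a write that fails mid-flight would lose it; a sturdier version would need durable storage and a per-command acknowledgement from the store.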

Chapter 2 — Client-to-Server Streaming: "Store Reports, Cloud Collects"

What if it's the other way around? The store has been offline for an hour, racking up sales events, stock movements, and till closures. The moment connectivity returns, BridgeAPI needs to flood the cloud with everything it buffered — in order, without re-opening a connection for each event.

What if you had 200 stores each trying to POST 500 buffered events over REST the moment they all reconnected simultaneously? Your cloud endpoint would be on its knees.

Client-to-server streaming solves this elegantly — one stream per store, events flow continuously until the buffer is drained.

```protobuf
service SyncEngine {
  rpc UploadBufferedEvents(stream StoreEvent) returns (UploadSummary);
}

message StoreEvent {
  string event_id = 1;
  string store_id = 2;
  string type = 3;       // "Sale", "StockMovement", "TillClose"
  string payload = 4;
  int64 timestamp = 5;   // Unix ms: when it actually happened offline
}

message UploadSummary {
  int32 accepted = 1;
  int32 rejected = 2;
}
```

Service B, BridgeAPI (drains local buffer to cloud on reconnect):

```csharp
public async Task FlushOfflineBuffer(string storeId)
{
    var buffered = await _localBuffer.GetPendingEvents(storeId);
    if (!buffered.Any()) return;

    var client = new SyncEngine.SyncEngineClient(_channel);
    using var call = client.UploadBufferedEvents();

    foreach (var evt in buffered)
    {
        await call.RequestStream.WriteAsync(new StoreEvent
        {
            EventId = evt.Id,
            StoreId = storeId,
            Type = evt.Type,
            Payload = evt.Payload,
            Timestamp = evt.OccurredAt.ToUnixTimeMilliseconds()
        });
        Console.WriteLine($"⬆ Flushing: [{evt.Type}] from {evt.OccurredAt:HH:mm:ss}");
    }

    await call.RequestStream.CompleteAsync();

    // Awaiting the call throws RpcException if the stream broke, so the buffer
    // is only marked flushed once the cloud has returned its summary.
    var summary = await call;
    Console.WriteLine($"Upload complete: {summary.Accepted} accepted, {summary.Rejected} rejected");

    // Assumes MarkFlushed only touches the events read above,
    // not ones that were buffered while the flush was running.
    await _localBuffer.MarkFlushed(storeId);
}
```

Service A, SyncEngine (cloud ingests the stream, deduplicates, stores):

```csharp
public override async Task<UploadSummary> UploadBufferedEvents(
    IAsyncStreamReader<StoreEvent> requestStream,
    ServerCallContext context)
{
    int accepted = 0, rejected = 0;

    await foreach (var evt in requestStream.ReadAllAsync())
    {
        // Idempotency check: the same event_id may arrive twice if the stream was interrupted
        if (await _eventStore.AlreadyExists(evt.EventId))
        {
            rejected++;
            continue;
        }

        await _eventStore.SaveAsync(evt);
        accepted++;
    }

    return new UploadSummary { Accepted = accepted, Rejected = rejected };
}
```

Notice the idempotency check. When a store's connection drops mid-flush, BridgeAPI never receives the summary, so its next attempt restarts from the beginning of the buffer. Without deduplication, you'd count the same sale twice. With it, a replayed event is skipped and counted as rejected.
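One caveat with checking `AlreadyExists` and then calling `SaveAsync`: those are two separate steps, so if the same event arrives on two streams at nearly the same time (an old call still draining while a new one starts), both could pass the check. A minimal sketch of an atomic check-and-insert follows, using an in-memory dictionary as a stand-in for the real event store. `IdempotentEventStore` and `TrySave` are hypothetical names; with a database, a unique index on the event ID would play the same role.

```csharp
using System.Collections.Concurrent;

// In-memory stand-in for the cloud event store (illustration only).
public sealed class IdempotentEventStore
{
    private readonly ConcurrentDictionary<string, string> _events = new();

    // Returns true if the event was stored, false if its ID was already present.
    // TryAdd checks and inserts in one atomic step, so two concurrent uploads
    // of the same event cannot both be accepted.
    public bool TrySave(string eventId, string payload) =>
        _events.TryAdd(eventId, payload);

    public int Count => _events.Count;
}
```

The ingest loop would then increment `accepted` or `rejected` based on the single return value instead of making two calls.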

Chapter 3 — Bidirectional Streaming: The Handshake That Never Drops

This is the pattern that made the whole thing click for me.

Have you ever had a critical event — say, a till discrepancy or a stock shortage — get lost because the system that sent it had no way to know if the cloud received it?

Bidirectional streaming gives you a live, two-way handshake. BridgeAPI sends events. SyncEngine sends ACKs. If an ACK doesn't arrive, the event stays in BridgeAPI's local log and gets retried on the next reconnect. Nobody has to intervene, and nobody loses data.

Scenario: BridgeAPI streams real-time operational events (sales, alerts, errors) to SyncEngine. For each one, SyncEngine acknowledges. If the stream drops mid-session, on reconnect the BridgeAPI retries only the unacknowledged events from its local log.

```protobuf
service SyncEngine {
  rpc OperationalChannel(stream StoreEvent) returns (stream EventAck);
}

message EventAck {
  string event_id = 1;
  bool ok = 2;
  string reason = 3;   // populated if ok = false
}
```

Service A, SyncEngine (processes events, ACKs each one):

```csharp
public override async Task OperationalChannel(
    IAsyncStreamReader<StoreEvent> requestStream,
    IServerStreamWriter<EventAck> responseStream,
    ServerCallContext context)
{
    await foreach (var evt in requestStream.ReadAllAsync())
    {
        Console.WriteLine($"[{evt.StoreId}] {evt.Type} at {DateTimeOffset.FromUnixTimeMilliseconds(evt.Timestamp):HH:mm:ss}");

        EventAck ack;
        try
        {
            await _processor.HandleAsync(evt);
            ack = new EventAck { EventId = evt.EventId, Ok = true };
        }
        catch (Exception ex)
        {
            // NACK: BridgeAPI will retry
            ack = new EventAck { EventId = evt.EventId, Ok = false, Reason = ex.Message };
        }

        // Written outside the try block so a broken stream is not
        // mistaken for a processing failure and NACKed on a dead connection.
        await responseStream.WriteAsync(ack);
    }
}
```

Service B, BridgeAPI (sends events, tracks ACKs, retries NACKs):

```csharp
public async Task StartOperationalChannel(string storeId)
{
    var client = new SyncEngine.SyncEngineClient(_channel);
    using var call = client.OperationalChannel();

    // Task 1: Receive ACKs and mark events as confirmed
    var ackListener = Task.Run(async () =>
    {
        await foreach (var ack in call.ResponseStream.ReadAllAsync())
        {
            if (ack.Ok)
            {
                await _localBuffer.MarkConfirmed(ack.EventId);
                Console.WriteLine($"ACK: {ack.EventId}");
            }
            else
            {
                Console.WriteLine($"NACK: {ack.EventId} ({ack.Reason}). Will retry.");
                // Event stays in local buffer and is retried on next reconnect
            }
        }
    });

    // Task 2: Stream live events as they come from Client C (POS / scanners)
    await foreach (var evt in _localEventQueue.ReadAllAsync())
    {
        await call.RequestStream.WriteAsync(new StoreEvent
        {
            EventId = evt.Id,
            StoreId = storeId,
            Type = evt.Type,
            Payload = evt.Payload,
            Timestamp = evt.OccurredAt.ToUnixTimeMilliseconds()
        });
    }

    await call.RequestStream.CompleteAsync();
    await ackListener;
}
```

What this gives you: Every event has a paper trail. BridgeAPI knows exactly which events reached the cloud and which didn't. On the next reconnect, only the unconfirmed ones are retransmitted. Your cloud data ends up complete, even if the store was offline for hours.

The network can go down. The power can flicker. As long as the local buffer survives, not a single sale is lost.

The Part Nobody Tells You

The dirty secret of hybrid offline systems isn't the streaming — it's the local buffer.

Without a reliable local event log at BridgeAPI, none of this works. Before you write a single line of gRPC code, answer these questions:

Where does BridgeAPI store events when the cloud is unreachable? (SQLite, LevelDB, a simple append-only file)

How does it know which events were ACKed and which weren't?

How does it handle clock skew between the offline device and the cloud?
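To make the first two questions concrete, here is a rough sketch of the "simple append-only file" option: events go into one file, confirmed IDs into another, and the pending set is the difference. `FileEventBuffer`, `BufferedEvent`, and the file names are hypothetical, not taken from the original system. The sketch does not force writes to disk, compact old entries, or recover from a half-written line, all of which a production buffer would need.

```csharp
using System;
using System.Collections.Generic;
using System.IO;
using System.Linq;
using System.Text.Json;

public sealed record BufferedEvent(string Id, string Type, string Payload, DateTimeOffset OccurredAt);

// Hypothetical file-backed buffer: one JSON document per line, never rewritten in place.
public sealed class FileEventBuffer
{
    private readonly string _eventsPath;
    private readonly string _ackedPath;
    private readonly object _lock = new();

    public FileEventBuffer(string directory)
    {
        Directory.CreateDirectory(directory);
        _eventsPath = Path.Combine(directory, "events.jsonl");
        _ackedPath = Path.Combine(directory, "acked.txt");
    }

    // Append before sending, so the event is still there after a process restart.
    public void Append(BufferedEvent evt)
    {
        lock (_lock)
            File.AppendAllText(_eventsPath, JsonSerializer.Serialize(evt) + "\n");
    }

    // Record a cloud ACK; GetPending skips the event from then on.
    public void MarkConfirmed(string eventId)
    {
        lock (_lock)
            File.AppendAllText(_ackedPath, eventId + "\n");
    }

    // Everything appended but not yet confirmed, in the order it was appended.
    public List<BufferedEvent> GetPending()
    {
        lock (_lock)
        {
            if (!File.Exists(_eventsPath)) return new List<BufferedEvent>();

            var acked = File.Exists(_ackedPath)
                ? new HashSet<string>(File.ReadAllLines(_ackedPath))
                : new HashSet<string>();

            return File.ReadAllLines(_eventsPath)
                .Where(line => line.Length > 0)
                .Select(line => JsonSerializer.Deserialize<BufferedEvent>(line)!)
                .Where(evt => !acked.Contains(evt.Id))
                .ToList();
        }
    }
}
```

Because the ACK state lives in its own file, a restarted BridgeAPI can rebuild the list of unconfirmed events without any in-memory bookkeeping.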

Get the buffer right, and gRPC streaming becomes simple. Get it wrong, and you'll have a fast pipe delivering inconsistent data.
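Clock skew is the one question above that none of the samples in this article touch. One common approach is an NTP-style estimate from a single round trip, sketched below. It assumes SyncEngine reports its own current time somewhere in a response (for example, in a hypothetical extra field on an ACK, which the proto shown earlier does not have). `ClockSkew` and its methods are illustrative names.

```csharp
using System;

public static class ClockSkew
{
    // Estimates (cloud clock - store clock) from one request/response exchange.
    // Assumes the request and the response spent roughly equal time on the wire.
    public static TimeSpan EstimateOffset(
        DateTimeOffset localSend,
        DateTimeOffset cloudTime,
        DateTimeOffset localReceive)
    {
        DateTimeOffset localMidpoint = localSend + (localReceive - localSend) / 2;
        return cloudTime - localMidpoint;
    }

    // Shifts a store-side timestamp onto the cloud's clock.
    public static DateTimeOffset ToCloudTime(DateTimeOffset localTimestamp, TimeSpan offset) =>
        localTimestamp + offset;
}
```

Whether to correct timestamps on the store or to send the raw timestamp plus the estimated offset and let the cloud decide is a design choice; sending both keeps the original reading available for audits.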

After the Whiteboard

That room full of arrows eventually became a working system. Forty-something stores. Some with sketchy internet. Some that go fully dark for maintenance windows.

When they come back online, BridgeAPI reconnects, streams the buffer, waits for ACKs, and clears the log. The cloud sees everything — in the order it happened, with the exact timestamp it happened — regardless of when the data actually arrived.

The head office stopped asking "why is the data missing?" They started asking "can we get more of it?"

That's when you know the architecture is working.

If you're building anything that touches the real world — field devices, retail systems, factory floor machines, hospital equipment — ask yourself:

Is my cloud integration assuming the network is always there? And what happens when it isn't?

Build the bridge first. Then let gRPC streaming carry everything across it.
